From Solo Prompts to Team Superpowers: Scaling AI with Shared Instructions

The senior developer leaned back in her chair, watching the new hire struggle with the same AI prompt she'd seen three other teammates wrestle with that week. "Try asking it to include error handling and logging," she called across the room. The junior dev nodded, retyped the prompt, and got better results. But tomorrow, someone else would hit the same wall. Again.

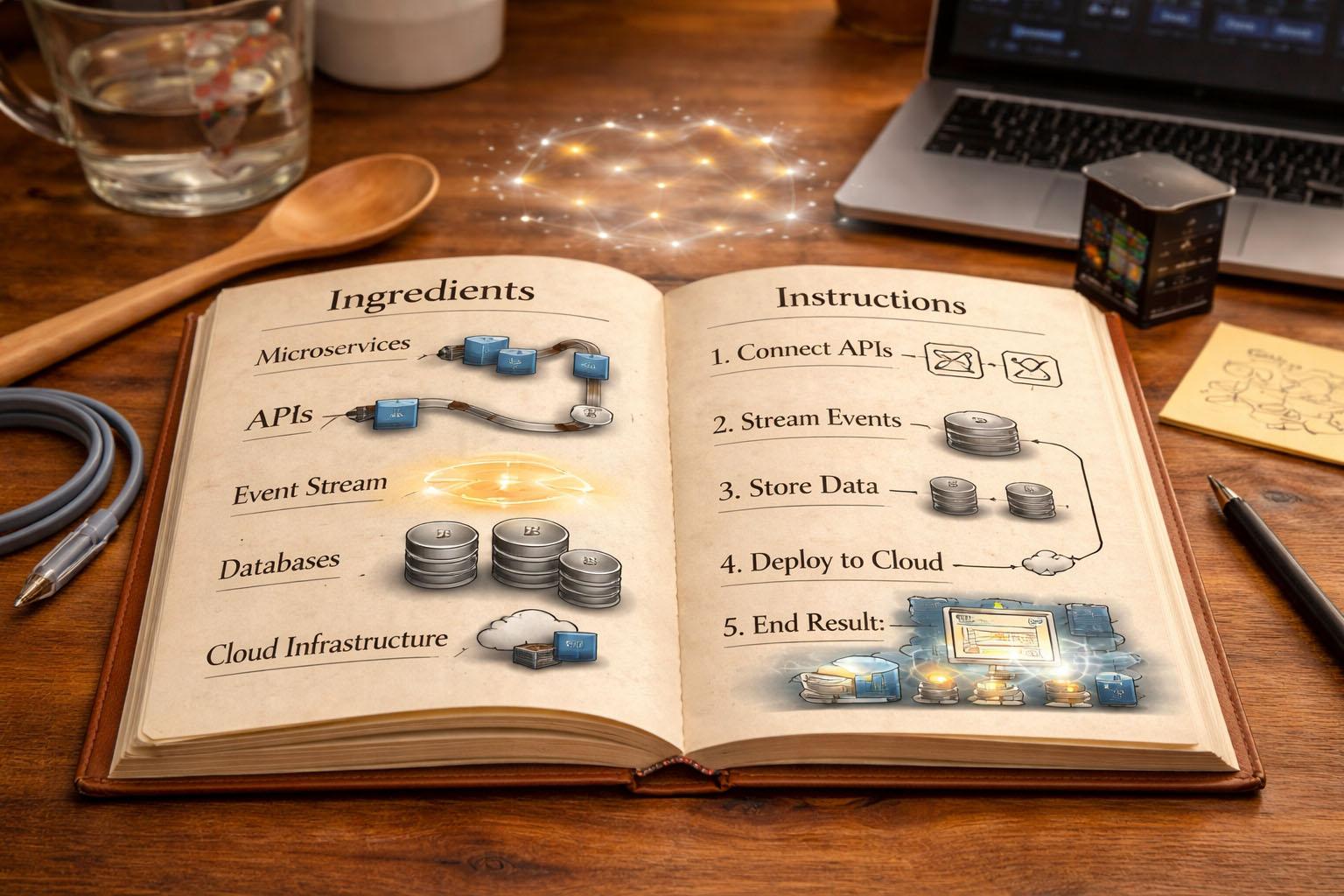

This scene plays out in engineering teams everywhere. Organizations invest in AI coding assistants, developers craft brilliant prompts through trial and error, and then... those hard-won insights evaporate. Each person optimizes their own workflow in isolation, rediscovering the same lessons, making the same mistakes, and slowly, painfully building expertise that never escapes their individual context. It's like having a team of chefs who refuse to share recipes.

The Invisible Productivity Leak

Here's the friction point: AI tools have democratized access to coding assistance, but they've also created a new form of knowledge fragmentation. Your team's collective intelligence about how to work with AI remains scattered across Slack threads, mental notes, and individual prompt histories that disappear the moment someone closes their laptop.

Kent Beck once observed that "making the invisible visible" is fundamental to software improvement. Right now, your team's AI prompting strategies are invisible and therefore impossible to systematically improve. When every developer maintains their own private collection of prompts, you're actively preventing your team from developing a shared language and approach to AI-assisted development.

The stakes are higher than you might think. AI is becoming integral to documentation, testing, architecture decisions, code reviews, and deployment strategies. Without shared instructions, you're essentially running parallel experiments where everyone's working from a different playbook.

Think of It Like Infrastructure as Code (But for AI)

Remember when deployment scripts lived in someone's head? Or when "the way we configure production" was tribal knowledge passed down through screen-sharing sessions? Infrastructure as Code solved that by making configurations explicit, versioned, and shareable.

Curated shared instructions do the same thing for AI interactions. Instead of each developer maintaining their own mental model of "how to ask AI for good test cases," you codify it once, version it, and everyone benefits. When someone discovers that adding "consider edge cases for null inputs and concurrent access" dramatically improves test generation, that insight becomes team knowledge the moment they commit the update.

Think of shared instructions as your team's AI playbook: a living document that captures what works, what doesn't, and why. Just as you wouldn't let each developer maintain their own private fork of your build configuration, you shouldn't let AI interaction patterns remain siloed.

The Anatomy of Shared AI Instructions

Shared instructions come in two primary flavors, each serving distinct purposes in your delivery pipeline.

Repository-Level Instructions

The most powerful approach for development work is committing instruction files directly to your project repository. The AGENTS.md file has emerged as a de facto standard, though you'll also see .cursorrules, .windsurfrules, or similar variants depending on your tooling.

What belongs in repository instructions:

| Category | Examples | Why It Matters |
|---|---|---|
| Code Style | Naming conventions, formatting preferences, architectural patterns | Ensures AI-generated code matches your team's standards |
| Domain Knowledge | Business rules, technical constraints, legacy system quirks | Prevents AI from making assumptions that violate your context |
| Testing Expectations | Coverage requirements, testing frameworks, mock strategies | Gets you production-ready tests, not just happy-path examples |
| Security Practices | Authentication patterns, data handling rules, compliance requirements | Embeds security by default in AI suggestions |
| Documentation Standards | Comment styles, README structure, API doc formats | Maintains consistency across all generated documentation |

Here's a practical example of what an AGENTS.md might contain for a .NET microservices project:

# AI Agent Instructions - Order Processing Service

## Project Context

This is a .NET 8 microservice handling e-commerce order processing. We use:

- Clean Architecture with MediatR for CQRS

- Entity Framework Core with PostgreSQL

- RabbitMQ for async messaging

- xUnit for testing with FluentAssertions

## Code Generation Rules

### API Controllers

- Always use minimal APIs (not controller classes)

- Include OpenAPI/Swagger annotations

- Implement proper error handling with ProblemDetails

- Add correlation IDs to all responses

Example structure:

```csharp
app.MapPost("/api/orders", async (CreateOrderCommand command, ISender mediator) =>
{
    var result = await mediator.Send(command);

    return result.IsSuccess
        ? Results.Created($"/api/orders/{result.Value.Id}", result.Value)
        : Results.BadRequest(new ProblemDetails { Detail = result.Error });
})
.WithName("CreateOrder")
.WithOpenApi();
```

### Testing Standards

- Every feature needs unit tests AND integration tests

- Use the AAA pattern (Arrange, Act, Assert)

- Mock external dependencies with NSubstitute

- Integration tests must use Testcontainers for database

- Minimum 80% code coverage for new code

### Domain Models

- Use record types for value objects

- Implement proper validation in constructors

- Never expose setters - use methods for state changes

- Include domain events for significant state transitions

## Security Requirements

- Never log sensitive data (credit cards, passwords, PII)

- All external inputs must be validated

- Use parameterized queries (EF Core handles this)

- Implement rate limiting on all public endpoints
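To make the Domain Models rules in the example above concrete, here is a minimal sketch of the kind of C# an assistant might produce when it follows them. The Money, Order, and OrderPaid names are hypothetical illustrations, not part of the original example, and a real project would likely use a typed domain event base class instead of object.

```csharp
using System;
using System.Collections.Generic;

// Value object as a record, validated in its constructor.
public sealed record Money
{
    public decimal Amount { get; }
    public string Currency { get; }

    public Money(decimal amount, string currency)
    {
        if (amount < 0)
            throw new ArgumentOutOfRangeException(nameof(amount), "Amount cannot be negative.");
        if (string.IsNullOrWhiteSpace(currency))
            throw new ArgumentException("Currency is required.", nameof(currency));
        Amount = amount;
        Currency = currency;
    }
}

// Hypothetical domain event raised on a significant state transition.
public sealed record OrderPaid(Guid OrderId, Money Total);

public sealed class Order
{
    private readonly List<object> _domainEvents = new();

    public Guid Id { get; }
    public Money Total { get; }
    public bool IsPaid { get; private set; } // no public setter
    public IReadOnlyList<object> DomainEvents => _domainEvents;

    public Order(Guid id, Money total)
    {
        Id = id;
        Total = total ?? throw new ArgumentNullException(nameof(total));
    }

    // State changes go through methods, which also record the domain event.
    public void MarkPaid()
    {
        if (IsPaid) throw new InvalidOperationException("Order is already paid.");
        IsPaid = true;
        _domainEvents.Add(new OrderPaid(Id, Total));
    }
}
```

Reviewers can then check generated code against the same four rules: a record for the value object, validation in the constructor, no public setters, and an event on the state change.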

Organization-Wide Prompt Libraries

For tasks beyond coding, such as architecture reviews, documentation generation, and incident analysis, you need a different approach. These instructions don't belong in a single repository; they need to be accessible across all projects.

Common organization-wide instruction categories:

- Architecture Decision Records (ADRs): Prompts that help teams document decisions consistently

- Code Review Checklists: Structured review guidance that AI can apply

- Incident Response: Templates for analyzing production issues

- Documentation Generation: Standardized approaches for API docs, runbooks, etc.

- Onboarding Materials: Prompts for generating project overviews for new team members

Here's an example of a reusable prompt for generating ADRs:

# ADR Generation Prompt

You are helping document an architecture decision. Generate an ADR following this structure:

## Context

- What problem are we solving?

- What constraints exist (technical, business, timeline)?

- What's the current state?

## Decision

- What did we decide?

- What are the key principles guiding this decision?

## Consequences

Positive:

- [List benefits]

Negative:

- [List drawbacks and risks]

Neutral:

- [List trade-offs]

## Alternatives Considered

For each alternative:

- Name

- Why it was rejected

- What we learned from evaluating it

## Implementation Notes

- Migration path (if applicable)

- Success metrics

- Review date

Format the output as markdown suitable for committing to /docs/adr/.

Making the Implementation Work

Rolling out shared instructions doesn't require a big-bang transformation. Here's a pragmatic path forward that won't disrupt your existing workflow.

Step 1: Start with One Project, One File

Pick your most active project and create an AGENTS.md file in the repository root. Start embarrassingly simple:

# AI Instructions

## Our Stack

- .NET 8

- PostgreSQL

- Docker

## Code Style

- Use C# 12 features

- Prefer records for DTOs

- Always include XML documentation for public APIs

## When Writing Tests

- Use xUnit

- Follow AAA pattern

- Name tests: MethodName_Scenario_ExpectedResult

Commit it. That's it. You're now doing shared instructions.

Step 2: Capture Wins as They Happen

When someone discovers a prompt pattern that works well, add it immediately. Don't wait for perfection. Did Sarah figure out how to get better database migrations from AI? Add it:

## Database Migrations

When generating EF Core migrations:

- Include both Up and Down methods

- Add comments explaining complex data transformations

- Consider existing data (not just schema)

- Include index creation for foreign keys

Step 3: Configure Your Tools

Most modern AI coding assistants support custom instructions. Here's how to wire them up:

For Cursor:

- Instructions in .cursorrules at project root are automatically loaded

- Reference with @docs in prompts

For Windsurf:

- Create a .windsurfrules file

- Use cascade commands to apply specific instruction sets

For GitHub Copilot:

- Copilot reads repository-wide custom instructions from a .github/copilot-instructions.md file in editors that support them

- Use workspace-level instructions in VS Code settings for anything editor-specific

For Claude or ChatGPT (non-coding tasks):

- Maintain a shared document repository (Notion, Confluence, etc.)

- Create custom GPTs or Projects with pre-loaded instructions

- Use consistent naming: [Team] - [Purpose] (e.g., "Platform Team - ADR Generator")
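If your team uses several assistants, one way to avoid drift between their instruction files is to treat AGENTS.md as the source of truth and copy it to the tool-specific locations. The sketch below assumes that plain copying is acceptable for your tools. The InstructionSync class is hypothetical, and the Copilot path is the location GitHub documents for repository custom instructions.

```csharp
using System;
using System.IO;

public static class InstructionSync
{
    // Tool-specific file names; adjust to the assistants your team actually uses.
    private static readonly string[] Targets =
    {
        ".cursorrules",
        ".windsurfrules",
        Path.Combine(".github", "copilot-instructions.md"),
    };

    // Copies AGENTS.md from repoRoot to each target and returns how many files were written.
    public static int Sync(string repoRoot)
    {
        var source = Path.Combine(repoRoot, "AGENTS.md");
        if (!File.Exists(source))
            throw new FileNotFoundException("AGENTS.md not found in repository root.", source);

        var content = File.ReadAllText(source);
        var written = 0;

        foreach (var target in Targets)
        {
            var path = Path.Combine(repoRoot, target);
            var directory = Path.GetDirectoryName(path);
            if (!string.IsNullOrEmpty(directory))
                Directory.CreateDirectory(directory);

            // Skip files that are already up to date to avoid noisy diffs.
            if (File.Exists(path) && File.ReadAllText(path) == content)
                continue;

            File.WriteAllText(path, content);
            written++;
        }

        return written;
    }
}
```

Calling InstructionSync.Sync(repoRoot) from a small console tool or a pre-commit hook keeps the copies aligned. Tools that expect a different format would need a transformation step rather than a straight copy.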

Step 4: Create a Feedback Loop

Set up a lightweight review process:

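// Example: add a simple check to your CI pipeline
using System;
using System.IO;
using Xunit;

public class AgentInstructionsTests
{
    // Tests run from the build output folder, so walk up until AGENTS.md is found.
    private static string FindAgentsFile()
    {
        var dir = new DirectoryInfo(AppContext.BaseDirectory);
        while (dir is not null && !File.Exists(Path.Combine(dir.FullName, "AGENTS.md")))
        {
            dir = dir.Parent;
        }
        return Path.Combine(dir?.FullName ?? AppContext.BaseDirectory, "AGENTS.md");
    }

    [Fact]
    public void AgentsFile_ShouldExist()
    {
        Assert.True(
            File.Exists(FindAgentsFile()),
            "AGENTS.md file is missing. AI instructions should be documented."
        );
    }

    [Fact]
    public void AgentsFile_ShouldBeRecentlyUpdated()
    {
        // Caveat: a fresh CI checkout resets file timestamps, so this check is
        // only meaningful where write times are preserved.
        var lastModified = File.GetLastWriteTimeUtc(FindAgentsFile());
        var daysSinceUpdate = (DateTime.UtcNow - lastModified).TotalDays;
        Assert.True(
            daysSinceUpdate < 90,
            $"AGENTS.md hasn't been updated in {daysSinceUpdate:F0} days. Consider reviewing."
        );
    }
}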
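Checking that the file exists says little about whether it is still useful. A complementary check, sketched here under the assumption that your team agrees on a small set of required headings, verifies that those sections are present. The AgentsFileLint class is hypothetical, and the heading names come from the earlier AGENTS.md example. For simplicity it treats any line starting with # as a heading, including lines inside code fences.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class AgentsFileLint
{
    // Returns the required headings that do not appear in the markdown text.
    public static IReadOnlyList<string> MissingSections(
        string markdown,
        IEnumerable<string> requiredHeadings)
    {
        var headings = markdown
            .Split('\n')
            .Select(line => line.Trim())
            .Where(line => line.StartsWith('#'))
            .Select(line => line.TrimStart('#').Trim())
            .ToHashSet(StringComparer.OrdinalIgnoreCase);

        return requiredHeadings.Where(h => !headings.Contains(h)).ToList();
    }
}
```

A test can read AGENTS.md, call MissingSections with names such as "Project Context" and "Security Requirements", and fail with the list of missing headings in the message.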

Step 5: Measure and Iterate

Track simple metrics to validate the approach:

- Time to onboard new developers (should decrease)

- Consistency in AI-generated code (measure through code review comments)

- Prompt reuse (how often do people reference shared instructions?)

Create a monthly ritual: Review the instructions, remove what's not being used, expand what's working.

Advanced Patterns: When Basic Isn't Enough

Once you've got the basics humming, consider these power moves:

Context-Specific Instructions: Use directory-level instruction files for different parts of your codebase:

/src/api/AGENTS.md # API-specific patterns

/src/domain/AGENTS.md # Domain modeling guidelines

/tests/AGENTS.md # Testing-specific instructions
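How nested instruction files are combined depends on the tool, so check your assistant's documentation. As a rough mental model, the sketch below collects every AGENTS.md between a file and the repository root, most specific first. The InstructionLocator class is hypothetical, and it assumes the file path lies inside the repository.

```csharp
using System;
using System.Collections.Generic;
using System.IO;

public static class InstructionLocator
{
    // Walks from the file's directory up to repoRoot and returns each AGENTS.md found,
    // ordered from most specific (closest to the file) to most general (repository root).
    public static IReadOnlyList<string> FindApplicable(string repoRoot, string filePath)
    {
        var root = Path.GetFullPath(repoRoot).TrimEnd(Path.DirectorySeparatorChar);
        var startDirectory = Path.GetDirectoryName(Path.GetFullPath(filePath));
        var found = new List<string>();

        var dir = startDirectory is null ? null : new DirectoryInfo(startDirectory);
        while (dir is not null)
        {
            var candidate = Path.Combine(dir.FullName, "AGENTS.md");
            if (File.Exists(candidate))
                found.Add(candidate);

            // Stop once the repository root has been checked.
            var current = dir.FullName.TrimEnd(Path.DirectorySeparatorChar);
            if (string.Equals(current, root, StringComparison.Ordinal))
                break;

            dir = dir.Parent;
        }

        return found;
    }
}
```

For a file under /src/api, this would return /src/api/AGENTS.md followed by the root AGENTS.md, which is a convenient order if the more specific file is meant to override the general one.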

Instruction Composition: Reference other instruction sets:

# Payment Service Instructions

Include: /docs/shared-instructions/security-baseline.md

Include: /docs/shared-instructions/logging-standards.md

## Service-Specific Rules

[Your specific additions here]
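The Include: lines above are a team convention rather than something every assistant understands, so you may need a small build step that expands them into a single file. Here is a minimal sketch of such a step. The InstructionComposer class is hypothetical, and it assumes include paths are written relative to the repository root.

```csharp
using System;
using System.Collections.Generic;
using System.IO;
using System.Text;

public static class InstructionComposer
{
    private const string IncludePrefix = "Include:";

    // Expands "Include: /path" lines relative to repoRoot and throws on circular includes.
    public static string Expand(string repoRoot, string relativePath)
        => Expand(repoRoot, relativePath, new HashSet<string>(StringComparer.Ordinal));

    private static string Expand(string repoRoot, string relativePath, HashSet<string> inProgress)
    {
        // Strip the leading slash so Path.Combine keeps the path inside repoRoot.
        var fullPath = Path.GetFullPath(Path.Combine(repoRoot, relativePath.TrimStart('/')));
        if (!inProgress.Add(fullPath))
            throw new InvalidOperationException($"Circular include detected: {relativePath}");

        var output = new StringBuilder();
        foreach (var line in File.ReadLines(fullPath))
        {
            var trimmed = line.Trim();
            if (trimmed.StartsWith(IncludePrefix, StringComparison.Ordinal))
            {
                var target = trimmed.Substring(IncludePrefix.Length).Trim();
                output.AppendLine(Expand(repoRoot, target, inProgress).TrimEnd());
            }
            else
            {
                output.AppendLine(line);
            }
        }

        inProgress.Remove(fullPath);
        return output.ToString();
    }
}
```

Running the expansion in CI and committing or publishing the result means each assistant sees one flat file, while the team still edits the shared baselines in one place.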

Version-Controlled Prompt Templates: Store reusable prompts as files:

/prompts/
  /code-review/
    security-check.md
    performance-review.md
  /documentation/
    api-docs.md
    readme-generator.md
  /testing/
    integration-test.md
    load-test-scenario.md
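Prompt files become more reusable if they carry placeholders that are filled in at the point of use. The sketch below assumes a {{name}} placeholder syntax, which is an illustrative choice and not a standard. The PromptTemplate class is hypothetical.

```csharp
using System;
using System.Collections.Generic;
using System.Text.RegularExpressions;

public static class PromptTemplate
{
    // Matches {{name}} with optional whitespace inside the braces.
    private static readonly Regex Placeholder = new(@"\{\{\s*(\w+)\s*\}\}");

    // Replaces each placeholder with its value and fails loudly if a value is missing.
    public static string Render(string template, IReadOnlyDictionary<string, string> values)
    {
        return Placeholder.Replace(template, match =>
        {
            var key = match.Groups[1].Value;
            return values.TryGetValue(key, out var value)
                ? value
                : throw new KeyNotFoundException($"No value supplied for placeholder '{key}'.");
        });
    }
}
```

A template such as "Review {{service}} for {{concern}}." can then be loaded with File.ReadAllText and rendered with a dictionary of values. Throwing on a missing key is a deliberate choice here, so that a half-filled prompt never reaches the assistant.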

Team-Specific Customization: Different teams can extend base instructions:

# Frontend Team Extensions

Base: /AGENTS.md

## Additional Rules

- Use React 18 with TypeScript

- All components must have Storybook stories

- Accessibility: WCAG 2.1 AA compliance required

The Compounding Returns

When you shift from individual prompting to shared instructions, several things happen simultaneously:

Faster Onboarding: New team members no longer have to work out your AI interaction patterns by trial and error. They get the playbook on day one. That junior developer who joined last week? They're getting the same quality AI assistance as your senior architect.

Consistent Quality: AI-generated code stops feeling like a grab bag of styles and approaches. Whether Alice or Bob asks for a new API endpoint, it follows the same patterns, includes the same error handling, and matches the same standards.

Knowledge Multiplication: When one person discovers a better prompt pattern and commits it, everyone picks it up on their next pull. Each improvement builds on the last, so your team's collective AI expertise compounds.

Reduced Review Friction: Code reviews become faster because AI-generated code already aligns with team standards. Reviewers spend less time on style nitpicks and more time on architectural concerns.

Your Move

Here's what matters:

- Start small: One file, one project, today. Perfect is the enemy of shipped.

- Capture wins immediately: Don't let good prompts evaporate. Commit them when they're fresh.

- Make it visible: Shared instructions only work if people know they exist. Reference them in onboarding, link them in PR templates.

- Iterate ruthlessly: Review monthly, remove cruft, expand what works. These aren't stone tablets.

The question is whether your team will develop AI fluency as a shared competency or as a collection of individual skills that never quite add up to something greater. What's one prompt pattern your team keeps rediscovering? That's your starting point. Capture it, share it, and watch what happens when everyone has access to your best AI interactions. How will you know when shared instructions are working? When you stop hearing "How did you get it to do that?" and start hearing "I updated our instructions based on what I learned today."
