How to Stop One Service from Burning Down the House

It was just past midnight when my phone buzzed. Again.

"Payment service failure. Error rate: 96%. Retry storm detected."

I was leading the architecture team of a retail tech platform with millions of daily users. We were in the middle of our summer sales campaign, traffic was peaking, and all eyes were on system stability. A minor third-party payment gateway hiccup had escalated into a full-blown meltdown. Shopping carts froze. Transactions stalled. The user experience team was already fielding complaints on Twitter.

The root cause? Not the gateway itself, but our system's reaction to it. We had built something fast and feature-rich, but not fault-tolerant. That night, I learned a hard but transformative lesson about the Circuit Breaker pattern.

The Cost of Blind Trust in Distributed Systems

Without a circuit breaker in place, your system becomes dangerously optimistic. It assumes dependencies will always recover quickly, that retries will eventually succeed, and that pushing harder will solve the problem. But in distributed systems, this behavior often amplifies failure instead of containing it. You may see:

- Exponential retry storms, overwhelming the failing service and adjacent components.

- Thread exhaustion, where waiting connections block healthy threads from doing useful work.

- Client-side timeouts, escalating to user-facing outages.

- And worst of all, cascading failures that propagate like fire across microservices, bringing down not just one component, but the entire ecosystem.

In essence, not using a circuit breaker is like removing the fuse from your power box: when things go wrong, everything burns instead of just tripping the circuit. That’s why smart architecture anticipates failure by designing for resilience. The circuit breaker ensures your system can bend without breaking.

Applying the Circuit Breaker in Modern Architectures

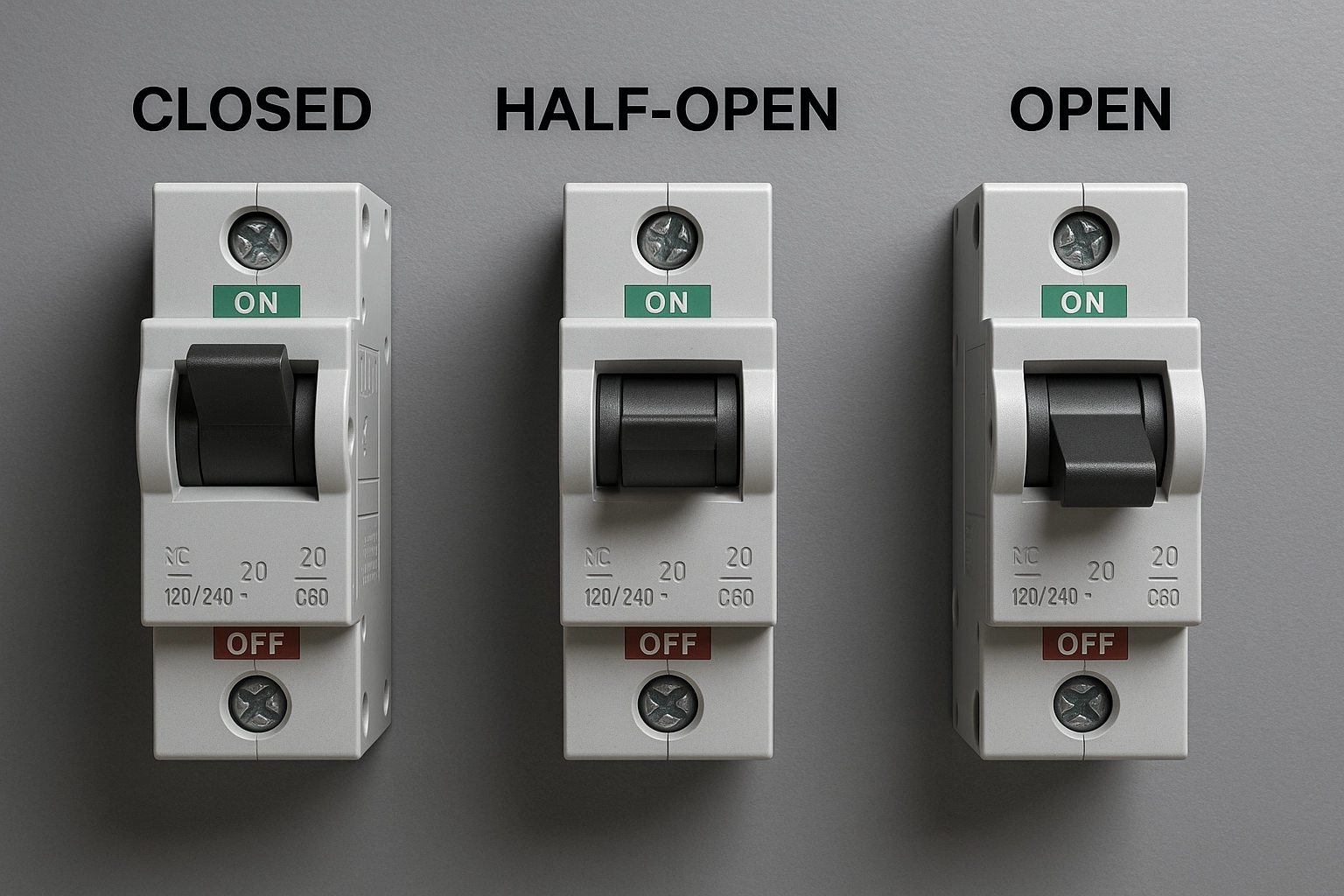

Before diving into implementation, it's important to understand the foundational behavior of the Circuit Breaker pattern. Much like its namesake in electrical engineering, the goal is to prevent damage from sustained failure by interrupting the flow of requests when things go wrong. In software systems, this pattern helps isolate failures and gives downstream services time to recover. It's especially powerful in microservices architectures, where one unstable service can easily impact many others if left unchecked.

At a high level, the pattern consists of three states:

🟩 Closed State

In the Closed state, the circuit breaker allows all requests to flow through to the target service. Everything appears healthy, and the system behaves as expected. However, behind the scenes, it’s monitoring response times, exceptions, and error rates over a sliding window. This observation establishes the baseline for determining when things go wrong. If the failure rate stays below the defined threshold, the circuit remains closed. But once the threshold is breached, typically based on a percentage of failed requests or timeouts, the breaker trips.

🟥 Open State

When the breaker transitions to the Open state, it effectively cuts off traffic to the downstream service. All incoming requests are blocked immediately, either returning a predefined fallback response or an error. It’s a protection. The goal is to prevent the failing service from being overwhelmed by retries and to stop cascading failures from reaching other parts of the system. During this time, the circuit breaker starts a cooldown timer, allowing the troubled service time to recover.

🟨 Half-Open State

Once the cooldown period expires, the breaker moves into the Half-Open state. It’s a testing phase. Instead of fully reopening the floodgates, the breaker allows a small number of trial requests through. If those requests succeed, the circuit is considered healthy again and transitions back to Closed. But if any of those test requests fail, the breaker immediately flips back to Open, reinitiating the cooldown cycle. This state ensures that your system doesn't overcommit too early, providing a controlled way to validate recovery without risking further instability.

Where It Fits in Your Architecture

Implementing a circuit breaker is about knowing where and how to apply it for maximum impact. This pattern is most useful when your system depends on external services or components that may become unresponsive, slow, or intermittently unavailable:

- Calling third-party APIs such as payment gateways, geolocation services, and email/SMS providers.

- Microservice communication, especially over HTTP or gRPC, where transient failures are common.

- Protect application logic when database connections or queries begin to fail.

- Enforce resilience across unpredictable, autoscaling environments for cloud-native apps.

Depending on your tech stack, operational model, and level of abstraction, there are a variety of tools and libraries available to implement circuit breakers. Ranging from in-process libraries that offer fine-grained control to infrastructure-level solutions integrated into service meshes or API gateways. The right choice often depends on whether you’re implementing resilience at the application level, the platform level, or as part of a broader service orchestration strategy. Below are some of the most commonly used and battle-tested options across ecosystems:

| Platform | Library / Tool |

|---|---|

| Java (Spring) | Resilience4j - Lightweight, modular, and built for Spring Boot |

| .NET | Polly - Fluent, flexible fault-handling policies |

| Node.js | Opossum - Effective circuit breaker for async Node.js apps |

| Service Mesh | Istio / Envoy - Built-in circuit breaking for outbound traffic in service-to-service calls |

| AWS | API Gateway + Lambda - Throttling and retry configurations with exponential backoff |

| Azure | API Management + App Gateway - Retry and timeout policies configurable via policies and rules |

To get the most value from a circuit breaker, you’ll need to not only implement it correctly but also tune its parameters and actively observe its behavior in real-world conditions:

-

Fallbacks: Always pair a circuit breaker with graceful degradation or cached responses.

-

Observability: Use OpenTelemetry, Prometheus, or vendor-native tools to track circuit state changes.

-

Configuration Tuning:

- Failure threshold: % of failed requests before opening.

- Cooldown period: How long to wait before trying again.

- Timeouts: How long to wait for a response before treating it as failed.

Payment Service Recovery with Polly

Let’s revisit the outage that sparked our circuit breaker journey. But this time, through the lens of a .NET-based system using Polly. At the core of our checkout workflow was a payment orchestration service built in ASP.NET Core. It acted as the broker between our application and two external payment gateways. During a flash sale event, one of those gateways began to fail, introducing latency, timeouts, and eventually HTTP 500s. Because we had no protective mechanisms in place, our application kept hammering the gateway with retries, consuming threads, bloating queues, and triggering timeouts further upstream.

Once we identified the failure pattern, we introduced a Polly circuit breaker policy into the HTTP client pipeline of our orchestration service. We wrapped the outbound HTTP calls to the payment provider using a circuit breaker policy like this:

using Microsoft.Extensions.DependencyInjection;

using Microsoft.Extensions.Hosting;

using Polly;

using Polly.Extensions.Http;

var builder = Host.CreateApplicationBuilder(args);

// Register an HttpClient with a Polly Circuit Breaker policy

builder.Services.AddHttpClient("ResilientClient")

.AddPolicyHandler(GetCircuitBreakerPolicy());

builder.Services.AddHostedService<App>();

await builder.Build().RunAsync();

// Define the Polly v8+ Circuit Breaker policy

IAsyncPolicy<HttpResponseMessage> GetCircuitBreakerPolicy()

{

return HttpPolicyExtensions

.HandleTransientHttpError()

.CircuitBreakerAsync(

handledEventsAllowedBeforeBreaking: 5,

durationOfBreak: TimeSpan.FromSeconds(30),

onBreak: (outcome, breakDelay) =>

{

Console.WriteLine($"\n⚡ Circuit open! Reason: {outcome.Exception?.Message ?? outcome.Result?.StatusCode.ToString()}. Breaking for {breakDelay.TotalSeconds} sec.\n");

},

onReset: () =>

{

Console.WriteLine("\n✅ Circuit reset. Traffic allowed.\n");

},

onHalfOpen: () =>

{

Console.WriteLine("\n🟡 Circuit is half-open. Trying test request...\n");

});

}

Once Polly was integrated into our payment orchestration service, we immediately saw more controlled behavior under failure conditions. When five consecutive failures occurred within a short time frame, the circuit breaker tripped. This halted all traffic to the failing payment provider for a 30-second cooldown period, allowing the downstream system to recover without being overwhelmed.

After the cooldown, Polly transitioned into a Half-Open state and allowed a single test request to probe the external service. If the request succeeded, the breaker returned to the Closed state, resuming normal operations. If it failed, the breaker re-entered the Open state, extending the isolation period. In parallel, we implemented fallback logic: if the primary provider was unavailable, requests were rerouted to a secondary gateway. If no fallback was possible, the service returned a well-structured failure response, preventing retry loops at the front end.

To support operations and observability, we wired Polly’s circuit breaker events into our logging infrastructure and exposed metrics to Prometheus. These metrics were visualized in Grafana, giving the SRE team real-time insight into breaker state transitions.

The Wisdom in Failure

The Circuit Breaker pattern reminds us that sometimes the best response is to stop trying, step back, and let the system recover. In modern distributed systems, failure is inevitable. What matters is how we contain it. Without circuit breakers, a minor glitch in one service can escalate into a full-blown outage across multiple components. With them, we create boundaries, zones of safety that prevent a failing part from bringing everything down. As solution architects, our job is to build systems that know when to press pause. That night, the system fought back. But now, we’re fighting smarter.